Rohit Vartak

Research Assistant, MBZUAI (May 2026 –) | LLMs, AGI

Masdar City, Abu Dhabi

United Arab Emirates

I am a Research Assistant at MBZUAI (May 2026 –), advised by Prof. Praneeth Vepakomma.

My research focuses on reasoning, robustness, and efficiency in large language models (LLMs). Broadly, I study how models reason and how to design methods that improve reasoning while remaining scalable in real-world settings.

My work spans several areas, including:

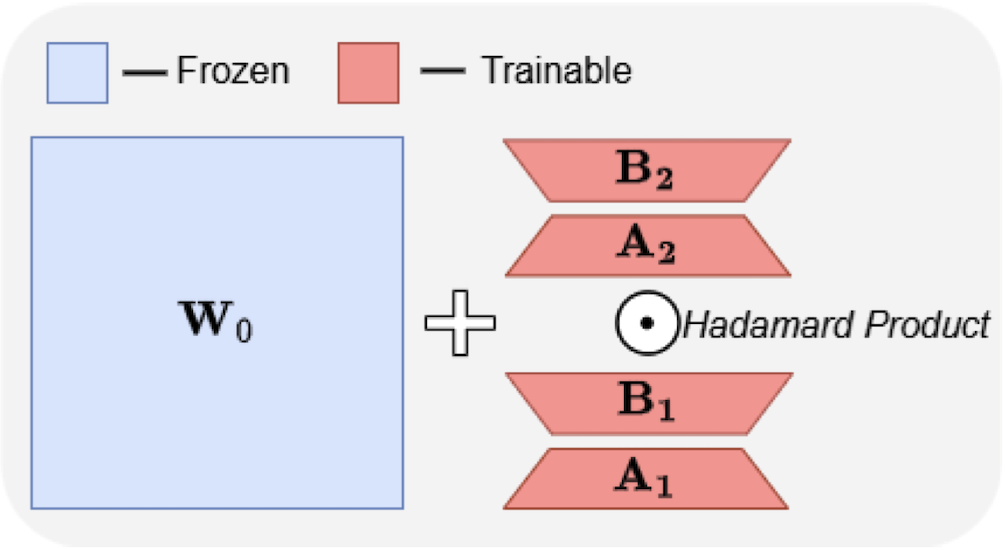

- Parameter-efficient fine-tuning, improving expressivity and adaptability of large models

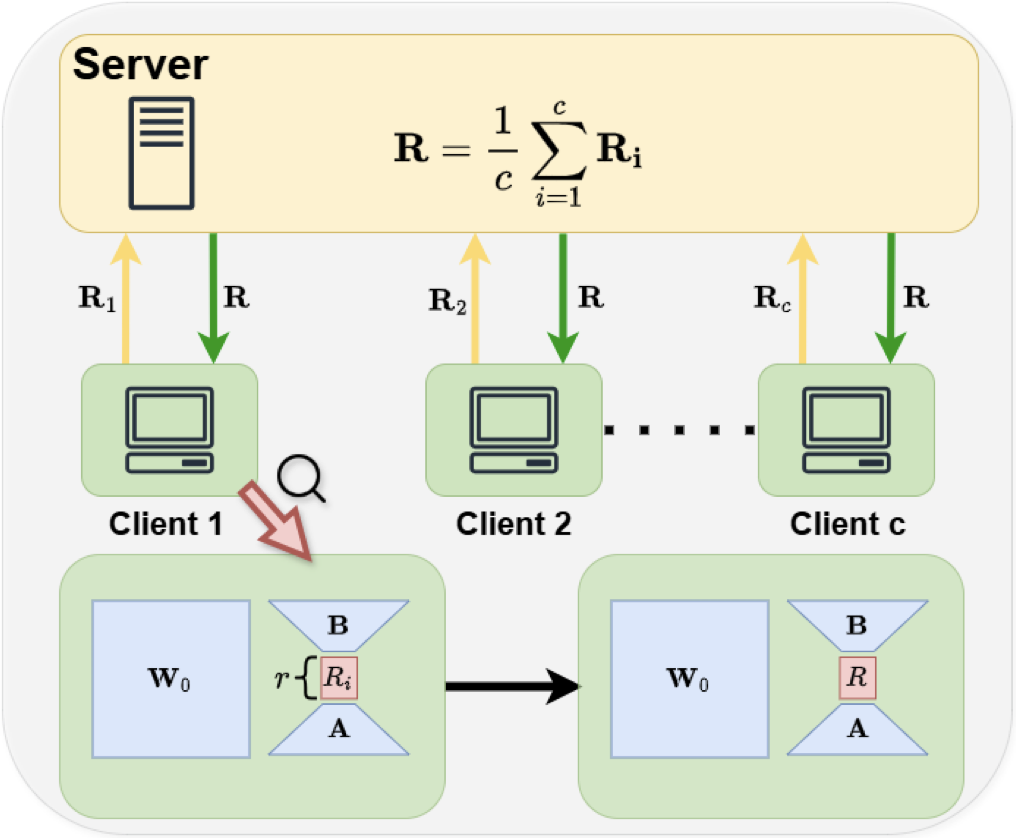

- Federated learning for LLMs, with an emphasis on communication-efficient training

- (Multimodal) reasoning, studying how models process and reason over visual and textual inputs

- LLM applications in healthcare, focusing on real-world user interaction with medical chatbots

Prior to this, I completed my M.S. in Computer Science at Duke University, where I worked with Prof. Bhuwan Dhingra on the mathematical reasoning capabilities of multimodal LLMs.

During this time, I also interned with Prof. Praneeth Vepakomma at MBZUAI (Summer 2025), working on efficient and scalable training methods for large models, and collaborated with Prof. Monica Agrawal on medical chatbots and their real-world usability.

Before Duke, I completed my B.Tech in Electrical Engineering and M.Tech in Artificial Intelligence and Data Science at IIT Bombay.

news

| Date | News |
|---|---|
| Apr 08, 2026 | Successfully completed my M.S. defense (course-only track) at Duke University. |
| Mar 04, 2026 | Our paper “Fed-SB: A Silver Bullet for Extreme Communication Efficiency and Performance in (Private) Federated LoRA Fine-Tuning” has been accepted to TMLR 2026. |
| Jan 20, 2026 | Our paper “ABBA-Adapters: Efficient and Expressive Fine-Tuning of Foundation Models” has been accepted to ICLR 2026. |