project 4

another without an image

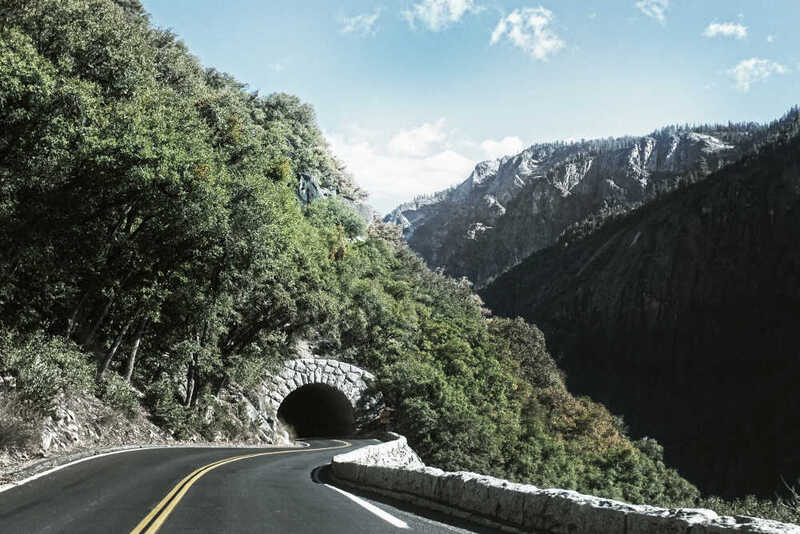

Every project has a beautiful feature showcase page. It’s easy to include images in a flexible 3-column grid format. Make your photos 1/3, 2/3, or full width.
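As a rough sketch of these widths, assuming the same `figure.liquid` include and Bootstrap classes that appear in this page's own example further down (the image paths are placeholders, not files known to exist in the theme):

```html
<!-- Three equal columns: each image takes 1/3 of the row on small screens and up -->
<div class="row">
  <div class="col-sm mt-3 mt-md-0">
    {% include figure.liquid path="assets/img/placeholder-a.jpg" title="example image" class="img-fluid rounded z-depth-1" %}
  </div>
  <div class="col-sm mt-3 mt-md-0">
    {% include figure.liquid path="assets/img/placeholder-b.jpg" title="example image" class="img-fluid rounded z-depth-1" %}
  </div>
  <div class="col-sm mt-3 mt-md-0">
    {% include figure.liquid path="assets/img/placeholder-c.jpg" title="example image" class="img-fluid rounded z-depth-1" %}
  </div>
</div>

<!-- Full width: a single column spans the whole row -->
<div class="row">
  <div class="col-sm mt-3 mt-md-0">
    {% include figure.liquid path="assets/img/placeholder-wide.jpg" title="example image" class="img-fluid rounded z-depth-1" %}
  </div>
</div>
```

Columns marked `col-sm` share the row equally, so the number of columns in a row decides how wide each image is.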

To give your project a background image on the portfolio page, add the `img` field to the front matter like so:

    ---
    layout: page
    title: project
    description: a project with a background image
    img: /assets/img/12.jpg
    ---

You can also put regular text between your rows of images. Say you wanted to write a little bit about your project before you posted the rest of the images. You describe how you toiled, sweated, bled for your project, and then… you reveal its glory in the next row of images.
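One way this could look in the page source, sketched with the same `figure.liquid` include used in this page's own example (the image paths and the paragraph text are placeholders):

```html
<!-- First row of images -->
<div class="row">
  <div class="col-sm mt-3 mt-md-0">
    {% include figure.liquid path="assets/img/placeholder-before.jpg" title="example image" class="img-fluid rounded z-depth-1" %}
  </div>
</div>

<!-- Regular text sits between the rows, outside any row div -->
<p>A few sentences about how the project came together.</p>

<!-- Next row of images -->
<div class="row">
  <div class="col-sm mt-3 mt-md-0">
    {% include figure.liquid path="assets/img/placeholder-after.jpg" title="example image" class="img-fluid rounded z-depth-1" %}
  </div>
</div>
```

Because each row is closed before the text begins, the paragraph flows at full page width rather than inside the grid.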

The code is simple. Just wrap your images with `<div class="col-sm">` and place them inside `<div class="row">` (read more about the Bootstrap Grid system). To make images responsive, add the `img-fluid` class to each; for rounded corners and shadows, use the `rounded` and `z-depth-1` classes. Here’s the code for the last row of images above:

    <div class="row justify-content-sm-center">
      <div class="col-sm-8 mt-3 mt-md-0">
        {% include figure.liquid path="assets/img/6.jpg" title="example image" class="img-fluid rounded z-depth-1" %}
      </div>
      <div class="col-sm-4 mt-3 mt-md-0">
        {% include figure.liquid path="assets/img/11.jpg" title="example image" class="img-fluid rounded z-depth-1" %}
      </div>
    </div>